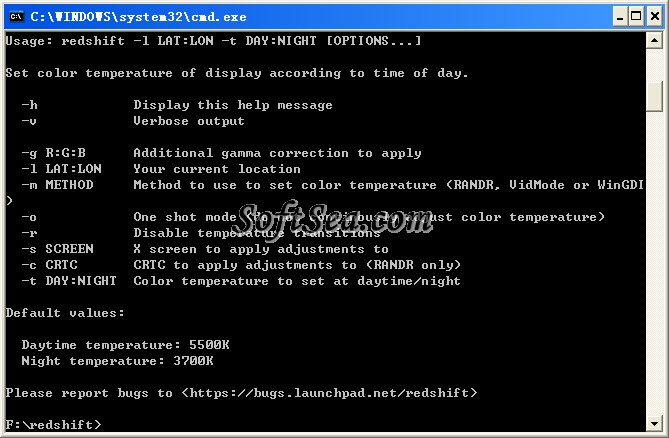

Presto has evolved into a unified engine for SQL queries on top of cloud data lakes, covering both interactive queries and batch workloads across multiple data sources. This tutorial shows how to run SQL queries with Presto (running on Kubernetes) against AWS Redshift.

Presto's Redshift connector lets you run SQL queries on data stored in an external Amazon Redshift cluster. It can be used to join data between different systems, such as Redshift and Hive, or between two different Redshift clusters.

How to Run SQL Queries in Redshift with Presto

Step 1: Set up a Presto cluster with Kubernetes

Set up your own Presto cluster on Kubernetes using our Presto on Kubernetes tutorial, or use Ahana's managed service for Presto.

Step 2: Set up an Amazon Redshift cluster

Create an Amazon Redshift cluster from the AWS Console and make sure it is up and running with a dataset and tables. The cluster used in this example is named "redshift-presto-demo". You will also need the cluster's JDBC URL to set up the Redshift connector in Presto.

You can skip this section if you want to use an existing Redshift cluster. Just make sure the cluster is accessible from Presto, because AWS services are secure by default. Even if you created your Amazon Redshift cluster in a public VPC, the security group assigned to the target cluster can block inbound connections to the database. Put simply, the security group settings of the Redshift database act as a firewall and prevent inbound database connections over port 5439. Find the assigned security group and check its inbound rules.

If your Presto Compute Plane VPC and your data sources are in different VPCs, you need to configure a VPC peering connection.

Step 3: Configure the Presto catalog for the Amazon Redshift connector

At Ahana we have simplified this experience, and you can complete this step in a few minutes.

Essentially, to configure the Redshift connector, create a catalog properties file in etc/catalog named, for example, redshift.properties, to mount the Redshift connector as the redshift catalog. Create the file with the following contents, replacing the connection properties as appropriate for your setup:

    connection-url=jdbc:postgresql://:5439/database
    connection-password=secret

The file also needs a connector.name=redshift entry and a connection-user entry.

This is what my catalog properties file, my_redshift.properties, looks like:

    connection-url=jdbc:postgresql://.:5439/dev

Step 4: Check available datasets, schemas, and tables, and run SQL queries with the Presto client to access the Redshift database

After successfully connecting to the Amazon Redshift database, connect with the Presto CLI and run queries to confirm that the Redshift catalog gets picked up. Run show schemas and show tables to see what data is available. The values for the server, schema, and user options are omitted here, so supply your own:

    $ ./presto-cli.jar --server --catalog my_redshift --schema --user --password

In this example, a new catalog for the Redshift database has been initialized, called "my_redshift".
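As a rough illustration of Steps 3 and 4, the following Python sketch renders the text of a Redshift catalog properties file and builds the two verification statements you would type into the Presto CLI. The helper names `render_redshift_catalog` and `verification_queries`, the host `redshift.example.invalid`, the user `example_user`, and the `public` schema are all assumptions made for this example and do not come from the tutorial. Only the property keys (`connector.name`, `connection-url`, `connection-user`, `connection-password`) and port 5439 reflect the connector setup described above.

```python
def render_redshift_catalog(host, database, user, password, port=5439):
    """Return the text of a Presto catalog properties file for Redshift.

    All argument values are supplied by the caller; nothing here talks
    to AWS or to Presto, it only formats the file contents.
    """
    lines = [
        "connector.name=redshift",
        f"connection-url=jdbc:postgresql://{host}:{port}/{database}",
        f"connection-user={user}",
        f"connection-password={password}",
    ]
    return "\n".join(lines) + "\n"


def verification_queries(catalog, schema):
    """Return the SQL statements used to confirm the catalog is visible."""
    return [
        f"SHOW SCHEMAS FROM {catalog}",
        f"SHOW TABLES FROM {catalog}.{schema}",
    ]


if __name__ == "__main__":
    # Hypothetical values: replace them with your own cluster details.
    print(render_redshift_catalog(
        host="redshift.example.invalid",
        database="dev",
        user="example_user",
        password="secret",
    ))
    # 'public' is assumed here as the schema to inspect.
    for statement in verification_queries("my_redshift", "public"):
        print(statement + ";")
```

You would save the rendered text as etc/catalog/my_redshift.properties (the file name determines the catalog name) and then paste the printed statements into the Presto CLI session. Keeping the password out of source control is left to your own deployment setup.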
